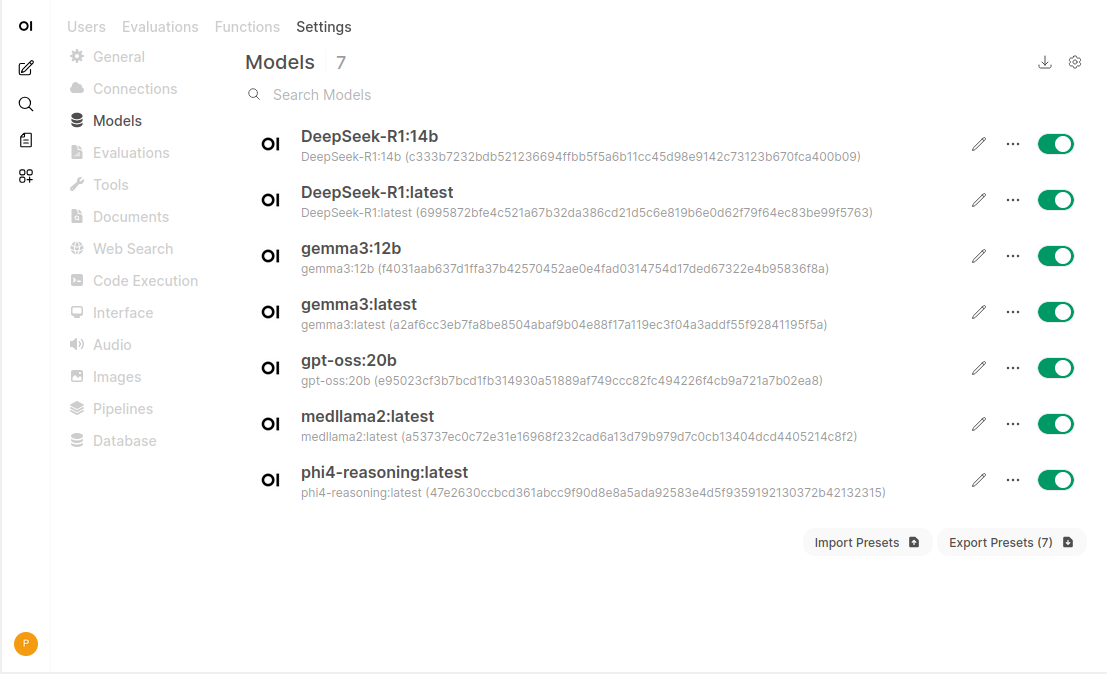

Ollama - Load models and run them

GPT-OSS:20.9b

Deepseek-R1:14.8b

Phi4 Reasoning:14.7b

Medllama2:7b

Gemma3:12.2b

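These are some of the models loaded into Ollama here at electricbrain.com.au. The "b" at the end of each name stands for billions (10^9) and gives the number of trainable weights (parameters) in the model, that is, the values that were adjusted during the model's learning phase. Generally speaking, the higher the number, the more capable the model, although a larger model also needs more memory and compute to run.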
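To make the suffix concrete, here is a minimal Python sketch that reads the parameter count out of each label listed above and estimates how much memory the weights alone would occupy. The function names `parameter_count` and `approx_weight_gib` are hypothetical helpers written for this illustration, and the 4 bits per weight figure is only an assumed example value, not a statement about how these particular models are stored.

```python
# Labels exactly as listed on this page.
MODELS = [
    "GPT-OSS:20.9b",
    "Deepseek-R1:14.8b",
    "Phi4 Reasoning:14.7b",
    "Medllama2:7b",
    "Gemma3:12.2b",
]


def parameter_count(label: str) -> float:
    """Return the number of weights encoded in a label such as 'Gemma3:12.2b'."""
    # Everything after the last colon is the size, e.g. '12.2b'.
    size = label.rsplit(":", 1)[1].strip().lower()
    if not size.endswith("b"):
        raise ValueError(f"expected a size ending in 'b', got {size!r}")
    # b = billions = 10^9
    return float(size[:-1]) * 1e9


def approx_weight_gib(params: float, bits_per_weight: float = 4.0) -> float:
    """Rough size of the weights in GiB.

    bits_per_weight is an assumption for illustration; the real figure
    depends on how a given model file is quantized.
    """
    total_bytes = params * bits_per_weight / 8
    return total_bytes / 2**30


if __name__ == "__main__":
    for label in MODELS:
        params = parameter_count(label)
        print(
            f"{label}: {params / 1e9:.1f} billion weights, "
            f"roughly {approx_weight_gib(params):.1f} GiB at 4 bits per weight"
        )
```

The estimate covers the weights only; a running model needs additional memory for its context and working buffers, so treat the result as a lower bound.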